How to Commission AI-Assisted Video Safely

Last updated: March 12, 2026

Commissioning AI-assisted video can feel like normal production right up until something gets awkward. A platform wants disclosure. Someone asks where a look came from. A re-edit is needed and nobody can find the project files. None of that is rare. It's just easier to stumble into when a lot of creative work sits inside prompts, model settings and iterations that never appear in a traditional brief.

This is workflow guidance, not legal advice. Rules and platform policies vary, so for higher-stakes campaigns or real disputes, professional advice can be sensible.

Start with a decision gate: do you even need AI?

AI is a tool, not a requirement. Sometimes it's a strong fit, especially for stylised b-roll, controlled variation, synthetic cutaways or rapid concepting. Other times it adds risk and approval friction without improving the final work, and that's worth admitting early rather than defending later.

A quick decision gate helps you make that call without turning it into a debate about taste.

What job is AI doing here that a conventional workflow wouldn't do as well?

Where is the risk surface: realism, likeness, voice, brand cues or sensitive contexts?

Is there a hybrid route where AI is used for one narrow piece and the rest stays conventional?

If those answers aren't clear, it's often better to keep AI optional rather than making it the whole pipeline. That's not caution for its own sake. It's simply good production judgement.

If consent and disclosure are part of the project, the guide to AI video disclosure and consent checks helps keep decisions consistent when the work moves between teams and platforms.

Define deliverables that stay useful after sign-off

Most commissioning problems aren't about taste. They're about handover. The brand gets a file, then later needs a new aspect ratio, a clean audio swap, a localised caption version or a removal edit because a platform or stakeholder flags a shot. If the only deliverable is an mp4, you're back to rebuilding or negotiating under pressure.

It helps to think of deliverables in two layers. The first layer is what gets posted. The second layer is what keeps the project maintainable and defensible, meaning the bits that let someone reopen it, fix it and explain it without guessing. In practice that usually means project files that open without missing media, stems that line up with picture, a clear log for the final approved version, and licences and releases stored next to the assets they cover.

If the goal is to avoid later arguments about what was created, what was licensed and what was approved, deliverables overlap with ownership and proof in a very practical way.

The table below is a commissioning spec you can drop into a brief. It's deliberately plain, because it's meant to be used, not admired.

| Spec section | What to write | What to agree upfront | What to store as proof |
|---|---|---|---|
| Project goal and distribution | What the video needs to achieve and where it will run | Platforms, formats, aspect ratios, caption needs, paid or organic use | Final approved brief, channel list, approval message |
| AI use scope | What is generated, what is edited by hand, and what is not allowed | No-go areas like real person likeness, voice cloning, brand lookalikes, sensitive themes | Scope note, restrictions list, reference pack version |
| Deliverables and handover | Exactly what is delivered beyond the final export | Masters, platform versions, project files, prompt log level, model settings, clean stems, licences and releases | Delivered folder manifest, version names, archive location |
| Approval chain | Who can give notes and who can approve the final cut | Single decision-maker, approval checkpoints, what counts as sign-off | Approval messages, dated exports tied to approvals |
| Revision limits and change control | How many rounds and what becomes a change request | What is a round, how notes are consolidated, what triggers extra cost or timeline shift | Change log, revision summaries, updated brief versions |
| Transparency and documentation pack | What gets logged, what gets archived, and for how long | Prompt versioning for approved cut, settings summary, source asset licences, releases, retention period | Documentation pack folder, dated log, licences and releases alongside assets |
| Platform plan and response window | Who posts, who responds, and how quickly documentation is supplied | Account owner, disclosure responsibilities, response window for labels, rejection, demonetisation, or claims | Posted URLs, screenshots, timestamps, platform notices and responses |
| Reuse rules | What the brand can reuse and what the creator can reuse | Reuse of outputs, project files and stems, whether prompts and settings are reusable, limits by campaign or category | Reuse permissions clause, handover receipt, archive index |
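The "delivered folder manifest" in the spec can be as simple as a list of every handed-over file with its size and a content hash, so anyone can later confirm the archive still holds exactly what was delivered. The sketch below is one way to produce it, assuming a local handover folder; the function names and the `MANIFEST.json` filename are hypothetical, and SHA-256 is simply one common hash choice rather than a requirement.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large video masters don't need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(handover_dir: Path, manifest_name: str = "MANIFEST.json") -> dict:
    """Record every delivered file (relative path, size, SHA-256) under handover_dir.

    The manifest written at the top of the folder is skipped, so re-running
    the function doesn't hash a stale copy of itself.
    """
    manifest_path = handover_dir / manifest_name
    entries = []
    for path in sorted(handover_dir.rglob("*")):
        if path.is_file() and path != manifest_path:
            entries.append({
                "path": path.relative_to(handover_dir).as_posix(),
                "bytes": path.stat().st_size,
                "sha256": sha256_of(path),
            })
    manifest = {
        "created_utc": datetime.now(timezone.utc).isoformat(timespec="seconds"),
        "file_count": len(entries),
        "files": entries,
    }
    manifest_path.write_text(json.dumps(manifest, indent=2))
    return manifest
```

Running `build_manifest(Path("handover"))` once the final delivery is assembled gives a file that can sit in the documentation pack; re-running it later and comparing hashes shows whether anything in the archive has changed or gone missing.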

Lock revision, reuse and transparency rules before the first render

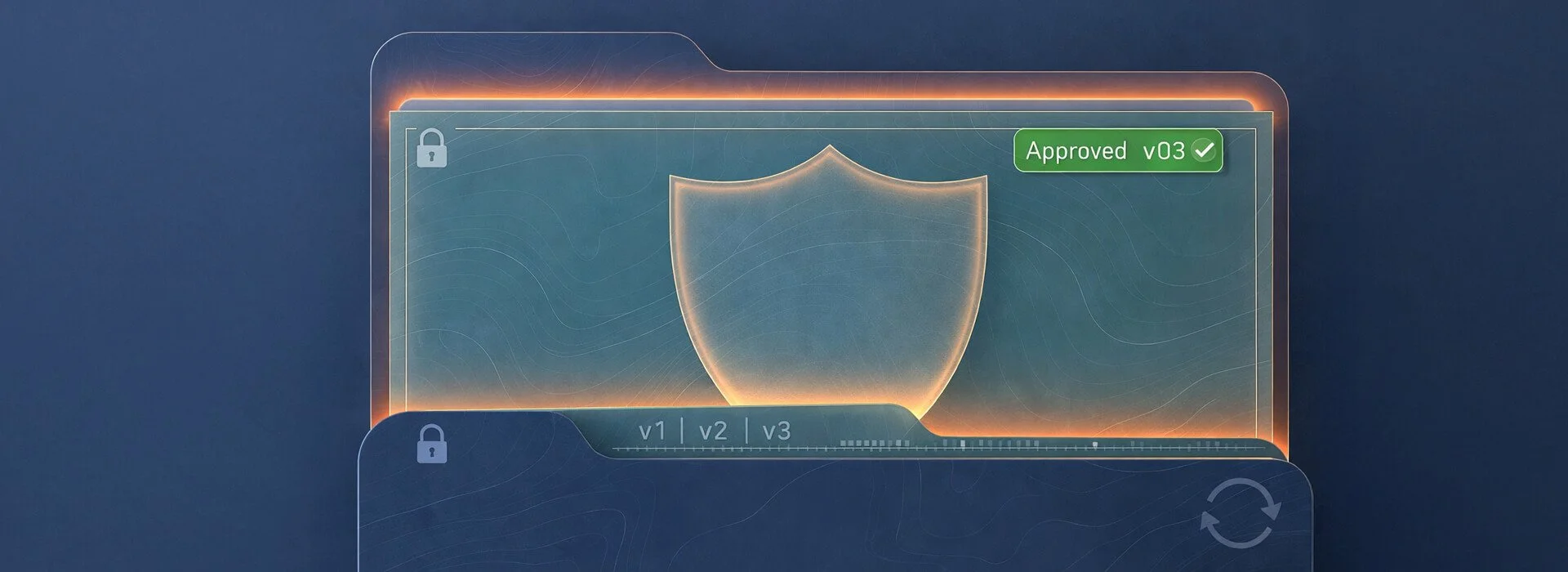

Treat approvals like version control: one approver, one consolidated notes round, one signed-off export.

Most projects don't go sideways because someone had bad intentions. They go sideways because nobody defined how decisions get made, and AI makes it too easy to keep exploring when the project actually needs to converge.

A balanced approach usually covers these areas.

One round equals one consolidated set of notes from one named approver

Notes that change the brief are treated as change requests

Approvals happen at checkpoints, not only at final export
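Treating approvals like version control works best when the rules are written down unambiguously. As an illustration only, the sketch below is a hypothetical in-memory approval log in Python, not an existing tool; `ApprovalLog`, its fields and the round limit are assumptions. It accepts notes only from the named approver, routes brief-changing notes and over-limit rounds to change control instead of counting them as rounds, and records which export file was signed off.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Any, Dict, List, Optional


@dataclass
class ApprovalLog:
    """Hypothetical project log: one named approver, capped rounds, one signed-off export."""

    approver: str
    max_rounds: int
    rounds: List[Dict[str, Any]] = field(default_factory=list)
    signed_off_export: Optional[str] = None

    def add_round(self, submitted_by: str, notes: List[str], changes_brief: bool = False) -> str:
        """Log one consolidated notes round, or return 'change request' if it falls outside scope."""
        if submitted_by != self.approver:
            # Notes from anyone else must be consolidated through the named approver first.
            raise ValueError(f"notes must be consolidated and sent by {self.approver}")
        if self.signed_off_export is not None:
            raise ValueError("cut already signed off; raise a change request instead")
        if changes_brief or len(self.rounds) >= self.max_rounds:
            # Brief changes and extra rounds go to change control instead of counting as a round.
            return "change request"
        self.rounds.append({
            "round": len(self.rounds) + 1,
            "date": date.today().isoformat(),
            "notes": list(notes),
        })
        return f"round {len(self.rounds)}"

    def sign_off(self, approved_by: str, export_filename: str) -> None:
        """Tie the final approval to one named export file."""
        if approved_by != self.approver:
            raise ValueError("only the named approver can sign off")
        self.signed_off_export = export_filename
```

In practice the same rules usually live in a shared spreadsheet or project tool; the value of writing them this precisely is that "what counts as a round" stops being open to interpretation.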

Reuse rules matter for AI work because it's easy for methods and outputs to blur together. A simple approach is to decide two things: what the commissioner can reuse (approved cuts, platform variants, project files and stems, within the agreed scope) and what the creator can reuse (non-identifying workflow patterns, provided no confidential material travels into another job). Prompts and settings should be addressed explicitly, so everyone knows whether a summary is enough or full reproducibility is expected.

Transparency is the part that protects brands when things get contentious. A project can be commissioned in good faith and still trigger platform penalties or reputational blowback if someone claims the output resembles another creator's work. As detection systems evolve, it's plausible that more similarities will be flagged, and the first impact may be reduced reach or a removal rather than a neat dispute you can schedule time for.

A workable process is light but deliberate. Ask for a short summary of references used, do a final pass for unintended resemblance, store approvals against the final cut, and agree who responds first and how quickly the documentation pack can be supplied. If a specific shot is too close for comfort, swapping a version is often a cleaner outcome than trying to win an argument in public.

This gets even more important when an agency is commissioning multiple creators against a shared asset pack. The winning move is consistency, not control. Use one commissioning spec, keep the agreed log level consistent across creators, make approvals traceable per cut, and agree one platform plan so disclosure and response steps don't drift across uploads.

Agree platform penalty risk and a takedown response plan

The platform layer is where commissioning choices meet reality. Even strong work can get labelled, limited or challenged, and you don't want the first time you discuss responsibilities to be after the upload is already in trouble.

On YouTube, it's worth getting familiar with how the platform expects creators to handle altered or synthetic material. The YouTube altered or synthetic content disclosure guidance sets out how that works during upload.

TikTok has also pushed creators towards clearer signalling for synthetic media. TikTok's guidance on labels for disclosing AI-generated content is a good starting point for understanding what the platform is trying to achieve.

If you want a cautious technical view that doesn't oversell what provenance and detection can guarantee, the NIST overview on reducing risks from synthetic content is a grounded reference. It explains approaches like provenance, labelling, watermarking, and detection, and it's very clear about trade-offs and limitations.

A response plan that keeps options open is boring on purpose.

Capture evidence: links, screenshots, timestamps and the exact posted file

Pause further uploads until the issue is understood

Assemble the documentation pack for the approved cut

Respond through platform processes first, using factual language

Swap a version if needed, rather than arguing in public

Escalate if there's real harm, especially where a person's likeness or voice is involved
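One concrete check for the first and third steps is whether the file that was actually uploaded is byte-for-byte the signed-off export. Platforms re-encode uploads, so a download from the platform won't match; the comparison has to use the file you uploaded, kept locally. A minimal sketch, assuming both files are on hand; the function names and record fields are hypothetical.

```python
import hashlib
from datetime import datetime, timezone
from pathlib import Path


def file_sha256(path: Path) -> str:
    """Hash a file in 1 MiB chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def evidence_record(uploaded: Path, approved: Path, post_url: str) -> dict:
    """Record, with a UTC timestamp, whether the uploaded file is the approved cut."""
    uploaded_hash = file_sha256(uploaded)
    approved_hash = file_sha256(approved)
    return {
        "captured_utc": datetime.now(timezone.utc).isoformat(timespec="seconds"),
        "post_url": post_url,
        "uploaded_file": uploaded.name,
        "approved_file": approved.name,
        "uploaded_sha256": uploaded_hash,
        "approved_sha256": approved_hash,
        "matches_approved_cut": uploaded_hash == approved_hash,
    }
```

A mismatch doesn't prove wrongdoing (a late re-export with identical picture will hash differently), but it tells you early that the documentation pack may not describe the file that is live.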

Key takeaways

The safest commissions are the ones that make the invisible parts of AI-assisted production visible enough to manage, without turning the project into admin.

Decide whether AI is actually needed, and keep it optional if it isn't

Define deliverables beyond the export, including project files, stems, settings and a usable log level

Agree revisions, reuse and transparency rules early, before exploration turns into scope creep

Treat similarity claims and platform penalties as operational risks, not theoretical ones

Assign platform responsibilities and a response window, then keep a tidy documentation pack ready

Following these steps doesn't slow production down. It makes the final video easier to use, defend and build upon, whether you're a brand commissioning work or a team delivering it. The result is usually higher trust, fewer surprises and work that travels better across platforms and over time.