AI Consent and Likeness Use in Film Production

Last updated: March 26, 2026

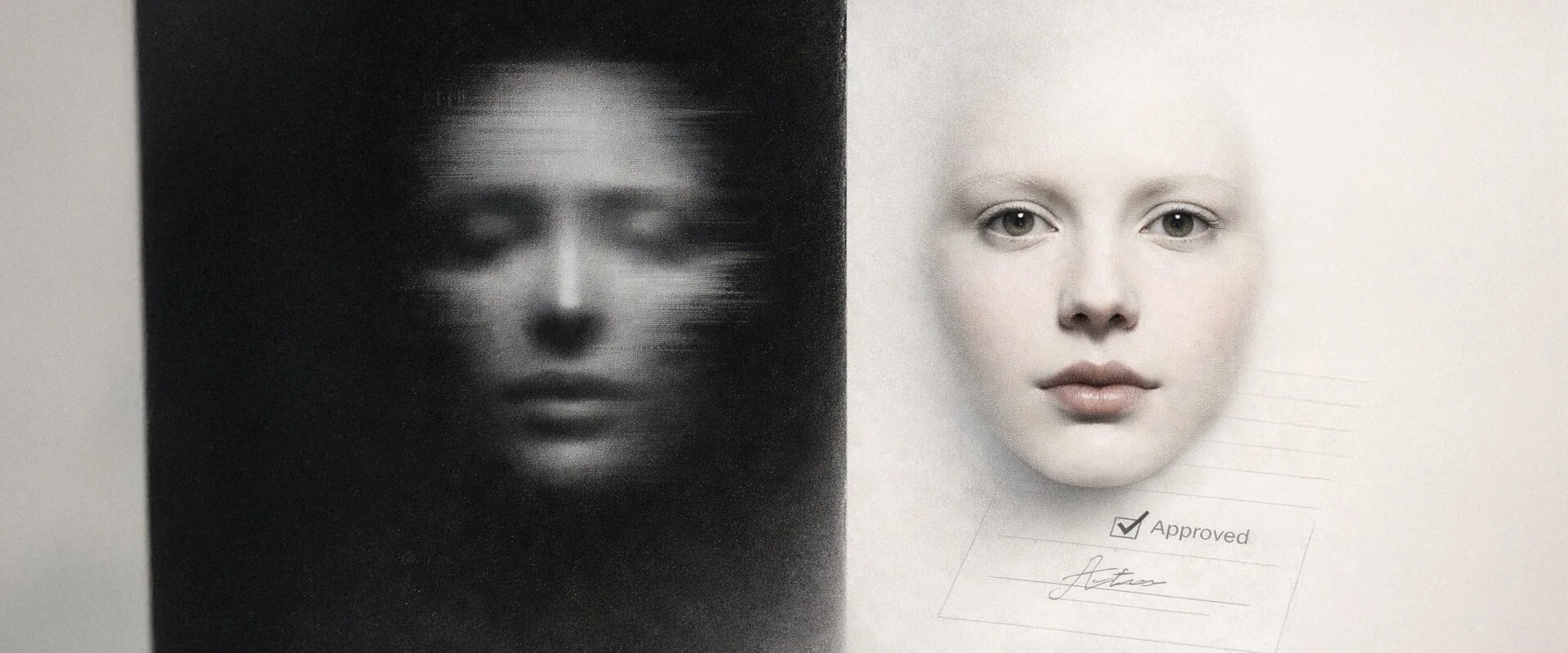

When a project involves AI, a real person’s face or voice can move from a creative choice to a trust problem very quickly. A synthetic version that looks or sounds like someone identifiable can imply endorsement, shift meaning, or trigger complaints even when nobody intended harm. Clear consent keeps projects stable, protects relationships, and gives you options when questions land later.

This is workflow guidance, not legal advice. Rules around likeness, voice, and personal data vary by country and context, so for commercial campaigns or real disputes, professional advice can be worth getting.

When consent becomes essential in AI-assisted production

Traditional filming usually requires consent for the specific shoot and use. AI changes the picture because a likeness or voice can be generated or altered long after any original recording, and it can be reused or repurposed in ways the person didn't anticipate. Brands and creators often produce corporate speaker videos, testimonials, or promotional content in which the person is recognisable and the risk of implied endorsement is high.

The difference shows up in practice. A short synthetic clip of a CEO might look polished, but if it appears in an unexpected context or is re-edited without permission, the fallout can be reputational before it becomes legal. Major studios increasingly treat synthetic replicas of talent as a clearance issue that needs explicit approval, and in the UK, existing legal routes such as passing off or data protection law can apply when consent is missing.

A few triggers usually tell you when consent needs to be treated as a priority.

The person is recognisable, even if the output is partly synthetic

The voice is used, cloned, or strongly imitated for dialogue or narration

The content could reasonably be read as endorsement in a corporate or brand setting

The project is likely to be cut into multiple versions across platforms and placements

The material may be repurposed later, such as internal training, recruitment, or future campaigns

If your project touches personal data, it helps to align with the way UK regulators frame risk and accountability. The ICO guidance on AI and data protection is a solid baseline for how fairness and transparency get assessed in practice.

If you want a UK policy reference that shows how the broader debate is being framed, the UK consultation on copyright and artificial intelligence is useful context on where some of these tensions sit.

A practical consent workflow for faces and voices

Consent works best when it’s treated as part of production rather than a final checkbox. If it shows up only at delivery, it tends to feel rushed, and that’s when misunderstandings happen.

The table below turns the workflow into a quick reference you can follow and file, especially when multiple stakeholders are involved.

| Workflow step | What to do | Why it matters | What to store |
|---|---|---|---|
| Identify | Confirm early whether any real face or voice will be used, referenced, or realistically implied. | It prevents late surprises and keeps approvals aligned with the actual risk. | A one-line scope note in the brief, plus the named person or talent reference. |
| Agree scope | Set limits before any generation, scanning, or cloning happens. | Most problems come from scope drift, not bad intentions. | A short written scope statement listing allowed and prohibited uses. |
| Preview | Share a simple preview of the intended style so consent is informed, not abstract. | People agree more confidently when they can see what you mean. | Preview file name and date, plus any feedback or constraints agreed. |
| Approve | Capture written approval for the specific use, including platforms and reuse boundaries. | It gives you clarity when content is repurposed or questioned later. | Signed release or written approval message linked to the project version. |
| Store | Keep consent materials alongside the project files and the approved export version. | You can respond quickly without reconstructing a paper trail under pressure. | Consent record saved in the same folder as the approved export and project files. |
| Reconfirm | Reconfirm consent if scope changes, especially for a new audience, platform, or region. | Consent that made sense in one context can feel wrong in another. | A short scope change note and the updated approval trail tied to the new version. |

One small habit makes a big difference. Tie consent to a version. If the approved cut is v03, the consent record should point to v03, not a vague idea of the project. That keeps approvals clean when edits happen later.
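For teams that track approvals in a script or asset database, the version rule can be expressed as a simple check. This is a minimal Python sketch under stated assumptions: the `ConsentRecord` fields, the `is_covered` helper, and the example names and file paths are all hypothetical, not part of any standard production tool.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ConsentRecord:
    """Hypothetical record linking one person's approval to one approved cut."""
    person: str
    project: str
    approved_version: str  # the exact cut the person approved, e.g. "v03"
    approval_date: date
    evidence_file: str     # signed release or saved approval message


def is_covered(record: ConsentRecord, project: str, export_version: str) -> bool:
    """True only if the export is the exact version this consent points to."""
    return record.project == project and record.approved_version == export_version


record = ConsentRecord(
    person="Jane Doe",
    project="Spring campaign",
    approved_version="v03",
    approval_date=date(2026, 3, 1),
    evidence_file="consent/jane_doe_release_v03.pdf",
)

print(is_covered(record, "Spring campaign", "v03"))  # True
print(is_covered(record, "Spring campaign", "v04"))  # False: v04 needs reconfirmation
```

The check is deliberately strict: any new version fails until someone reconfirms and updates the record, which mirrors the Reconfirm step in the table.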

What to capture in releases and approvals

Releases and approvals for AI work need to be more specific than traditional ones because the output can be altered or reused in ways that weren't possible before. The goal is to make boundaries clear enough that everyone understands them, without turning the project into legal theatre.

A simple checklist tends to cover these points.

Name and contact details of the person giving consent

Clear description of the likeness or voice to be used

Specific purpose and context of the use, for example, this campaign, these platforms and this duration

Whether the material can be edited, altered, or used to train models

Any restrictions on commercial use, political use, or sensitive contexts

Duration of the consent and any right to revoke

Confirmation that the person has seen examples of the intended style or output
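If releases are collected through a form or spreadsheet, the checklist above can double as a completeness check before any generation starts. The sketch below is a hypothetical Python illustration: the `Release` field names and the `missing_items` helper are assumptions chosen to mirror the checklist, and an empty field simply means the item has not been captured yet.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class Release:
    """Hypothetical release form mirroring the checklist; None means not captured yet."""
    name_and_contact: Optional[str] = None
    likeness_or_voice_description: Optional[str] = None
    purpose_and_context: Optional[str] = None
    editing_and_training_terms: Optional[str] = None
    use_restrictions: Optional[str] = None
    duration_and_revocation: Optional[str] = None
    preview_seen: Optional[str] = None  # e.g. which preview file was shown, and when


def missing_items(release: Release) -> list[str]:
    """List checklist fields that are still empty, in checklist order."""
    return [f.name for f in fields(release) if getattr(release, f.name) in (None, "")]


draft = Release(
    name_and_contact="Jane Doe, jane@example.com",
    likeness_or_voice_description="Face and voice, synthetic narration",
    purpose_and_context="Spring campaign, UK web and social, 12 months",
)
print(missing_items(draft))
# ['editing_and_training_terms', 'use_restrictions', 'duration_and_revocation', 'preview_seen']
```

A non-empty result is a prompt to go back to the person before work begins, not a legal judgement about whether the release is adequate.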

Example consent wording for scope, territory, duration, and reuse

This consent covers use of my face and/or voice in the AI-assisted video project called [Project name]. It may be edited for delivery in [formats] and published on [channels] in [territories] for [duration]. Any reuse outside this scope, including a new campaign, new territory, or a new synthetic performance, needs new written approval.
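The placeholders in that wording ([formats], [channels], [territories], [duration]) can be recorded as structured scope, so that a proposed reuse is checked against them before anyone publishes. This is a minimal Python sketch under stated assumptions: `ConsentScope`, `ProposedUse` and `scope_gaps` are hypothetical names, and the example values are invented for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ConsentScope:
    """Hypothetical scope taken from the signed wording."""
    formats: frozenset[str]
    channels: frozenset[str]
    territories: frozenset[str]
    expires: date  # end of the agreed duration


@dataclass(frozen=True)
class ProposedUse:
    format: str
    channel: str
    territory: str
    publish_date: date
    new_synthetic_performance: bool


def scope_gaps(scope: ConsentScope, use: ProposedUse) -> list[str]:
    """Reasons the use falls outside scope; an empty list means it is covered."""
    gaps = []
    if use.format not in scope.formats:
        gaps.append(f"format not agreed: {use.format}")
    if use.channel not in scope.channels:
        gaps.append(f"channel not agreed: {use.channel}")
    if use.territory not in scope.territories:
        gaps.append(f"territory not agreed: {use.territory}")
    if use.publish_date > scope.expires:
        gaps.append("consent period has ended")
    if use.new_synthetic_performance:
        # The wording above requires new written approval for any new synthetic performance.
        gaps.append("new synthetic performance")
    return gaps


scope = ConsentScope(
    formats=frozenset({"16:9", "9:16"}),
    channels=frozenset({"YouTube", "LinkedIn"}),
    territories=frozenset({"UK"}),
    expires=date(2027, 3, 31),
)
reuse = ProposedUse("9:16", "TikTok", "IE", date(2026, 9, 1), new_synthetic_performance=False)
print(scope_gaps(scope, reuse))
# ['channel not agreed: TikTok', 'territory not agreed: IE']
```

Returning every gap rather than stopping at the first one makes it easier to ask for a single, complete reapproval.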

If the goal is to avoid later arguments about what was created, what was licensed, and what was approved, consent overlaps in a very practical way with questions of ownership and proof.

The Netflix Generative AI guidelines for content production also emphasise consent for significant alterations that could affect emotional tone or delivery, and they treat synthetic replicas as needing dedicated review.

Planning for what changes after release

Once a video is public, you no longer fully control it. Even with clear consent and tidy releases, the media can be copied, re-edited, and reused by people outside the original project. This is not an AI-only issue. Fully human-made footage can still be downloaded and used as input to generate new material, including a synthetic voice or likeness, depending on what someone is trying to do and which tools they can access.

It’s worth naming the realistic risks plainly, so teams plan for them rather than assuming a signed release closes the story.

The video may be downloaded and re-uploaded elsewhere with a new caption or framing that shifts meaning

Speech can be extracted and used to imitate tone, cadence, or voice

A recognisable face can be re-contextualised, cropped, or composited into a new narrative

Public clips may be scraped or used as training input by third parties, even when policies restrict this, because enforcement can vary

The clip can travel into a context that reads as endorsement or affiliation, especially in corporate settings

A sensible approach is to treat public release as a handover event. That means agreeing a few safeguards in advance, not because they guarantee misuse will be prevented, but because they make the response quicker and calmer if reuse happens.

Avoid publishing clean, isolated dialogue tracks unless there is a real need

Keep the approved master export and the exact upload versions, with clear version names

Store consent records alongside the approved version, not just the project in general

Decide who monitors for misuse and how reports get triaged

Agree what happens if a reuse claim lands, including who contacts the person involved and who contacts the platform

Incident response patterns for corporate speaker scenarios

When a real person’s likeness or voice appears in synthetic content without clear consent, the situation is often reputational before it is legal. This is especially common with corporate speakers and brand talent, but the same principles apply whenever an identifiable individual is involved. A calm, prepared response helps protect relationships and limits damage.

A workable process is light but deliberate.

Capture evidence, including links, screenshots and the exact version in circulation

Contact the person or their representative quickly, leading with facts rather than defensiveness

Offer to remove or replace the content, and share the relevant consent documentation

Review internal records to confirm what consent was obtained and where any gap occurred

Update the workflow so the same gap cannot happen again

In practice, speed and transparency tend to de-escalate situations faster than an argument about rights. When the response shows that consent was treated seriously, trust is often easier to restore.

When consent is informal or missing

This is one of the most common failure modes in corporate work. Someone gives a quick verbal yes for one internal project, then months later the same face or voice shows up again in a different region, cut, or context. The person feels the use has drifted beyond what they agreed, and the brand can suddenly be dealing with an unexpected complaint.

In practice, this can lead to a few predictable outcomes.

The individual or their organisation may ask for removal or a change on a tight timeline

It can trigger internal review and reputational friction, even if nobody intended harm

In the UK, the complaint may be framed as passing off or as a data protection issue if the use implies endorsement or mishandles personal data

Even when no formal claim is made, trust and future collaboration can be damaged

The simplest way to reduce ambiguity is to treat every use of a real face or voice as needing a written record. A short signed release that spells out scope, territories, platforms, and duration removes most of the grey area before work begins.

Key takeaways

Consent is not just a legal concept. It’s a production safeguard that protects trust when content travels beyond its original context.

Treat consent as part of production, not a final checkbox

Tie consent to a specific approved version, not a vague project idea

Capture clear boundaries in releases, including alteration, reuse and retention

Plan for what changes after release, because third parties can copy and repurpose published content, even when it is fully human-made

Use a calm incident response pattern that prioritises speed, clarity and relationships

Following these steps does not slow production down. It makes the final video easier to use, defend and build upon, whether you are a brand commissioning work or a team delivering it. The result is usually higher trust, fewer surprises and work that travels better across platforms and over time.